For decades, cooling system management in data centers was viewed as a facilities function: essential to operations, but largely tactical in scope. That framing no longer reflects today’s reality.

The rapid growth of artificial intelligence (AI) and high‑performance computing (HPC) workloads, together with rising rack densities, has fundamentally reshaped the role of thermal management. Cooling systems now protect some of the most capital‑intensive and business‑critical assets in the modern economy. Even short‑duration thermal instability can cascade into equipment damage, service‑level agreement violations, regulatory exposure, and reputational risk.

At the same time, sustainability expectations and energy economics are redefining how cooling performance is evaluated. What was once measured in room temperature is now measured in resilience, efficiency, compliance, and real‑time operational visibility.

Yet across the industry, the limiting factor is not instrumentation density. It is measurement confidence.

What Should Actually Be Measured?

Many facilities operate with a growing number of sensors, dashboards, and alarms, yet operators and executives alike often lack clarity on whether they are measuring the variables that truly matter.

Should performance be assessed at the room level, at rack air intake, or across supply and return temperature differentials? How many sensing points provide meaningful insight before data becomes noise? How should temperature, pressure, and flow data be combined to reflect true cooling system performance?

These questions surface frequently as organizations modernize legacy infrastructure. But they often distract from a more fundamental principle: cooling performance is not defined by static setpoints. It is defined by the reliable delivery of thermal capacity to compute equipment – precisely when and where it is needed.

Room averages can conceal localized thermal stress. Overcooling can mask distribution imbalances. And temperature alone offers limited insight into whether chilled water systems are operating efficiently or compensating for inaccuracies elsewhere.

Air temperature indicates symptoms. Accurate flow, pressure, and thermal energy measurements reveal system behavior, capacity utilization, pumping efficiency, and hidden operational risk.
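The gap between symptoms and system behavior can be made concrete with the standard heat balance for a liquid loop, Q = ρ · V̇ · c_p · ΔT: delivered cooling depends directly on flow, not only on temperature. The Python sketch below is illustrative only. It assumes plain water with constant properties, and the function name and readings are hypothetical; glycol mixtures need their own density and specific heat values.

```python
# Sketch: cooling capacity actually delivered by a chilled water loop.
# Assumes plain water with constant properties; glycol mixtures need their own values.

WATER_DENSITY_KG_M3 = 998.0    # assumed: water at roughly 20 C
WATER_CP_KJ_PER_KG_K = 4.186   # assumed: specific heat of water


def delivered_cooling_kw(flow_m3_per_h: float, supply_temp_c: float, return_temp_c: float,
                         density_kg_m3: float = WATER_DENSITY_KG_M3,
                         cp_kj_per_kg_k: float = WATER_CP_KJ_PER_KG_K) -> float:
    """Heat picked up by the loop, in kW: Q = rho * V_dot * cp * (T_return - T_supply)."""
    mass_flow_kg_s = density_kg_m3 * flow_m3_per_h / 3600.0  # m^3/h -> kg/s
    delta_t_k = return_temp_c - supply_temp_c                # a 1 C difference is 1 K
    return mass_flow_kg_s * cp_kj_per_kg_k * delta_t_k       # kg/s * kJ/(kg*K) * K = kW


if __name__ == "__main__":
    # Hypothetical readings: 120 m^3/h flow, 18 C supply, 24 C return.
    true_kw = delivered_cooling_kw(120.0, 18.0, 24.0)
    # The same loop as reported by a flow meter that reads 5% high.
    biased_kw = delivered_cooling_kw(120.0 * 1.05, 18.0, 24.0)
    print(f"delivered: {true_kw:.0f} kW, reported with 5% flow bias: {biased_kw:.0f} kW")
```

Because Q is linear in flow, a flow meter that reads 5% high overstates delivered capacity by the same 5%, even when every temperature sensor is accurate.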

What executives require is not more data, but assurance: confidence that cooling capacity is real, scalable, and verifiably aligned with operational demand, without adding unnecessary complexity.

Data Volume Without Operational Assurance

In many environments, uncertainty triggers a predictable response: add more sensors.

The result is often alarm fatigue.

Front‑of‑rack temperature probes, HVAC sensors, pressure transmitters, and standalone devices generate a continuous stream of notifications. Without disciplined measurement strategies, contextual analytics, and validation logic, critical insight is quickly buried beneath noise.

More problematic is the assumption that redundancy alone ensures reliability.

In one facility, multiple flow measurement points were installed on a critical chilled water loop. Each device reported materially different values. The control system averaged the readings under the assumption that redundancy would improve accuracy. Instead, the inaccurate data drove sustained over‑pumping and unnecessary energy consumption, resulting in avoidable costs approaching six figures per year.

Redundancy without validation does not increase resilience. It amplifies uncertainty.

True operational confidence depends on measurement architectures that agree, cross‑validate, and reflect physical reality – providing trustworthy data for both control and decision‑making in uptime‑sensitive environments.
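One way to express "validation, not just redundancy" in code: rather than averaging every meter on a loop, compare each reading against the group median, exclude meters that disagree beyond a tolerance, and withhold the value entirely when no two meters corroborate each other. This is a hypothetical sketch, not the control logic used in the facility described above; the 3% tolerance, function name, and meter tags are assumptions.

```python
from statistics import median
from typing import Optional


def validated_flow(readings: dict[str, float],
                   tolerance: float = 0.03) -> tuple[Optional[float], list[str]]:
    """Cross-validate redundant flow meters instead of blindly averaging them.

    Returns (validated flow or None, sorted list of meters flagged as suspect).
    """
    if len(readings) < 2:
        raise ValueError("cross-validation needs at least two meters")
    consensus = median(readings.values())
    band = tolerance * abs(consensus)  # allowed deviation from the median
    agreeing = {tag: v for tag, v in readings.items() if abs(v - consensus) <= band}
    suspect = sorted(set(readings) - set(agreeing))
    if len(agreeing) < 2:
        # No two meters corroborate each other: withhold the value and raise a
        # maintenance flag rather than feeding the pump controller a guess.
        return None, suspect
    return sum(agreeing.values()) / len(agreeing), suspect


if __name__ == "__main__":
    # Hypothetical readings in m^3/h from three meters on the same loop.
    readings = {"FT-101": 410.0, "FT-102": 405.0, "FT-103": 470.0}
    naive = sum(readings.values()) / len(readings)
    value, suspect = validated_flow(readings)
    print(f"naive average: {naive:.1f}, validated: {value}, suspect: {suspect}")
```

With these readings a naive average reports about 428 m³/h, while the validated value is 407.5 m³/h and FT‑103 is flagged for inspection. The median is used as the reference because a single drifting meter cannot pull it far, unlike the mean.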

Sustainability, Water Stewardship, and the Compliance Horizon

Cooling performance is no longer evaluated solely through the lens of energy consumption.

Water usage, waste heat recovery, and sustainability outcomes are increasingly subject to regulatory oversight and public scrutiny. In Europe and other regions, efficiency frameworks now require structured reporting of performance indicators such as total water input, heat rejection efficiency, and energy‑related KPIs aligned with recognized data center standards.
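As an illustration of how metered totals become reportable indicators, the sketch below computes Power Usage Effectiveness (PUE, total facility energy divided by IT equipment energy) and Water Usage Effectiveness (WUE, site water in liters divided by IT equipment energy in kWh), following The Green Grid's definitions. Whether these are the exact indicators a given regulatory framework requires should be checked against that framework; the class, function names, and figures here are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class AnnualTotals:
    """Metered totals for one reporting year (assumed to come from validated meters)."""
    total_facility_energy_kwh: float
    it_equipment_energy_kwh: float
    site_water_input_m3: float

    def __post_init__(self) -> None:
        if self.it_equipment_energy_kwh <= 0:
            raise ValueError("IT equipment energy must be positive")


def pue(t: AnnualTotals) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    return t.total_facility_energy_kwh / t.it_equipment_energy_kwh


def wue_l_per_kwh(t: AnnualTotals) -> float:
    """Water Usage Effectiveness: site water input (liters) / IT equipment energy (kWh)."""
    return t.site_water_input_m3 * 1000.0 / t.it_equipment_energy_kwh


if __name__ == "__main__":
    # Hypothetical year: 13 GWh facility energy, 10 GWh IT energy, 18,000 m^3 of water.
    year = AnnualTotals(13_000_000.0, 10_000_000.0, 18_000.0)
    print(f"PUE: {pue(year):.2f}, WUE: {wue_l_per_kwh(year):.2f} L/kWh")
```

Both ratios pass meter error straight through: a water meter that reads 5% high inflates the disclosed WUE by 5%.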

As sustainability commitments transition from aspiration to governance requirement, measurement integrity becomes essential.

Water‑related discussions frequently expose another persistent challenge: uncertainty. Operators evaluate evaporative versus closed‑loop systems, assess blowdown strategies, and consider local water constraints. Yet in many cases, precise volumetric flow and pressure measurement at intake, distribution, and return points remains incomplete or inconsistent.

Without reliable flow, temperature, and pressure data, sustainability strategies remain theoretical, and efficiency claims become difficult to defend.

You cannot optimize what you cannot measure. And you cannot credibly report what you cannot verify.

Integrated measurement architectures, linking temperature, pressure, and flow data into control, monitoring, and reporting platforms, allow cooling to evolve from a cost center into a controllable efficiency lever, with clearer accountability across operations, engineering, and Environmental, Social, and Governance (ESG) teams.

Designing Measurement into System Architecture

Consider a recent large‑scale data center campus expansion built around a hybrid cooling strategy. Passive heat exchange supported operations during colder periods, while chillers provided supplemental capacity during warmer conditions. A heat‑recovery system drew waste heat from the primary cooling loop to supply adjacent facilities, improving site‑wide efficiency.

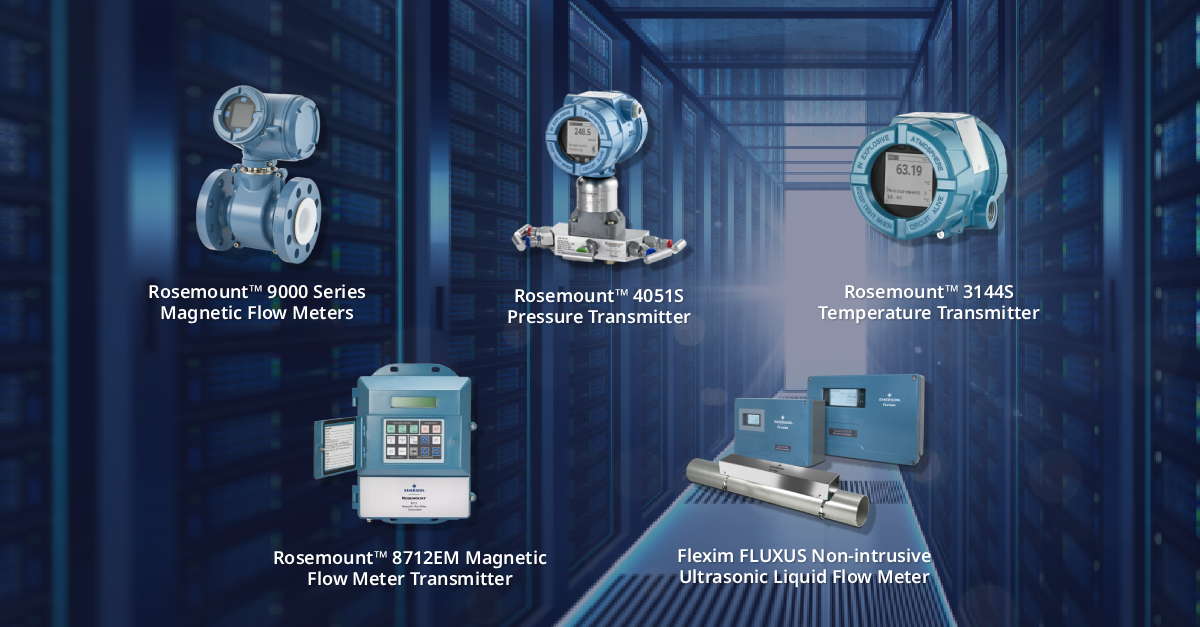

The design required reliable temperature, pressure, and flow measurement across primary headers and secondary circuits carrying both water and glycol mixtures. Measurement accuracy was essential – not only for control performance, but also for validating energy efficiency and water usage.

Rather than treating instrumentation as an afterthought, measurement was incorporated into the system architecture from the outset. Temperature transmitters were applied at critical supply and return points. Pressure measurement supported pump efficiency monitoring and fault detection. Flow measurement provided continuous verification of cooling capacity delivery and thermal energy transfer.
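To show how pressure and flow together support pump efficiency monitoring and fault detection, the sketch below computes wire‑to‑water efficiency: hydraulic power (flow × differential pressure) divided by the pump's electrical input. It assumes an electrical power reading for the pump motor, which the project description does not mention; the baseline efficiency, drift limit, and function names are hypothetical.

```python
def wire_to_water_efficiency(flow_m3_per_h: float, diff_pressure_kpa: float,
                             electrical_kw: float) -> float:
    """Hydraulic power delivered to the water divided by electrical power drawn."""
    hydraulic_kw = (flow_m3_per_h / 3600.0) * diff_pressure_kpa  # m^3/s * kPa = kW
    return hydraulic_kw / electrical_kw


def pump_status(flow_m3_per_h: float, diff_pressure_kpa: float, electrical_kw: float,
                baseline_efficiency: float, drift_limit: float = 0.05) -> str:
    """Classify a pump reading against an assumed commissioning baseline."""
    eff = wire_to_water_efficiency(flow_m3_per_h, diff_pressure_kpa, electrical_kw)
    if eff > 1.0:
        # Physically impossible: at least one of the three measurements is wrong.
        return "measurement_fault"
    if eff < baseline_efficiency - drift_limit:
        # Same duty, more power: wear, fouling, or a control problem worth investigating.
        return "degraded"
    return "ok"


if __name__ == "__main__":
    # Hypothetical pump: 120 m^3/h against 250 kPa, drawing 12.5 kW, baseline 0.68.
    print(pump_status(120.0, 250.0, 12.5, baseline_efficiency=0.68))
```

The "efficiency above 100%" branch is the measurement cross‑check: instruments that disagree with physics are flagged before their data reaches control or reporting.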

In some areas, installation constraints, operational risk, and uptime requirements influenced how measurement was deployed. Solutions that minimized mechanical modification, reduced commissioning effort, and avoided disruption to live cooling systems played an important role in maintaining schedule and reducing risk.

The result was a measurement strategy that improved transparency without adding unnecessary complexity – and one that could be replicated across multiple buildings as the campus expanded.

The takeaway is clear: when measurement is designed into system architecture – rather than retrofitted after issues arise – it simplifies infrastructure and improves confidence across the full asset lifecycle.

From Reactive Instrumentation to Proactive Assurance

A familiar pattern persists in the industry. Measurement investment often follows incidents.

Leak detection is installed after a leak. Additional temperature monitoring follows an overheating event. Flow validation becomes a priority only after energy waste is uncovered.

This reactive model is increasingly incompatible with modern data center requirements.

As AI workloads intensify and rack densities rise, thermal margins narrow. At the same time, regulators, investors, and customers expect transparent, verifiable performance and sustainability reporting.

Forward‑looking operators are adopting a more deliberate approach:

- Strategic placement of temperature, pressure, and flow measurement on primary cooling infrastructure

- Measurement architectures that support validation, not just redundancy

- Continuous verification of measurement performance over time

- Deployment approaches aligned with zero‑ or low‑disruption operations

- Automated integration of KPI data into energy, water, and ESG reporting frameworks

- Measurement strategies that support both control optimization and executive decision‑making

Cooling resilience is not achieved by adding sensors indiscriminately. It is achieved by measuring the right variables, in the right locations, with the right level of accuracy and confidence – using approaches that support availability and long‑term scalability.

The Executive Imperative

In the coming decade, data center competitiveness will increasingly depend on infrastructure transparency.

The most resilient facilities will not necessarily be those operating at the lowest temperatures. They will be those with the clearest understanding of how heat, water, and energy move through their systems – and the confidence that their measurement strategy reflects physical reality, supports rapid scaling, and stands up to operational and regulatory scrutiny.

Cooling is no longer simply thermal management.

It is business continuity.

It is energy economics.

It is regulatory readiness.

It is risk governance.

And increasingly, it is a source of strategic differentiation for operators under pressure to scale faster, prove efficiency, and reduce avoidable risk.

About the Author: Aaron Noviski leads strategic growth efforts for Emerson’s Measurement Solutions business in the North American data center market. He brings more than 25 years of experience across data center infrastructure, colocation, cloud, and hyperscale markets, partnering with owners, EPCs, engineering firms, OEMs, system integrators, and operators to support next-generation critical infrastructure.