How many of us dread being asked for data from a while back when our data management systems are largely spreadsheets scattered among different people? If this sounds familiar and you’re responsible for sharing this type of data at your manufacturing or production facility, this article may be for you.

In a Tank Storage article, Four Strategies to a Stronger Data Foundation, Emerson’s Mark Graham and Dapo Badmus share a case study of a hydrocarbon midstream company that sought major improvements in the way it collected, stored and collaborated on the data needed for its operations.

The status quo for this midstream company was potentially very costly.

Discrepancies in record keeping and product tracking had the potential to result in claim settlements in the millions of dollars.

They chose to work with Emerson’s Operational Certainty Consultants for Data Management in a digital transformation initiative to:

…design and implement strong data analytics to comprehensively redesign how they handled and analysed data.

Shortly after project completion, this transformation:

…helped them avoid a $2.5 million claim in the first few months.

The four key strategies Mark and Dapo describe for this successful project include:

- Improve data quality

- Centralize data

- Contextualize data

- Establish strong data policies

I’ll highlight the first strategy and invite you to read the article for the other three key strategies.

Before the digital transformation, operations were challenging.

Whenever a customer filed a claim that required tracking the quantity and location of product at a certain time, the midstream organisation’s management had to spend many hours combing through old data, stored in spreadsheets and the data historian, to see whether they could reconstruct the timeline and trace the product.

Data quality issues were abundant.

Lack of validation led to inconsistent or incomplete records, leaving investigators with missing or misplaced data. Because each recorded state was built on the states before it, a single missing record in the middle of a timeline broke the chain, and the organisation effectively had no usable record at all. As a result, management could not definitively prove that a stated volume of product had been delivered to the expected storage location within the contractual time period. Without adequate proof of correct delivery, the organisation had no way to dispute customer claims, which almost always meant a settlement.
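To make the chaining problem concrete, here is a minimal Python sketch of the kind of validation that was missing. It assumes hypothetical hourly tank inventory records of the form (timestamp, volume), where a volume of None marks an incomplete entry. The record layout, the one-hour interval and the function name `validate_chain` are illustrative assumptions, not details from the project described.

```python
from datetime import datetime, timedelta

# Hypothetical inventory record: (timestamp, volume_bbl). A volume of None
# stands for an incomplete entry. Readings are ASSUMED to arrive hourly.
EXPECTED_INTERVAL = timedelta(hours=1)


def validate_chain(records, interval=EXPECTED_INTERVAL):
    """Flag incomplete entries and time gaps that would break a
    state-by-state reconstruction of a product timeline."""
    issues = []
    ordered = sorted(records, key=lambda r: r[0])
    # Incomplete records: a reading exists but its value is missing.
    for ts, volume in ordered:
        if volume is None:
            issues.append(("incomplete", ts))
    # Gaps: consecutive readings further apart than the expected interval.
    for (prev_ts, _), (curr_ts, _) in zip(ordered, ordered[1:]):
        if curr_ts - prev_ts > interval:
            issues.append(("gap", prev_ts, curr_ts))
    return issues


records = [
    (datetime(2023, 1, 1, 0), 1000.0),
    (datetime(2023, 1, 1, 1), 1050.0),
    (datetime(2023, 1, 1, 2), None),     # value never captured
    (datetime(2023, 1, 1, 5), 1200.0),   # hours 03:00 and 04:00 are missing
]
issues = validate_chain(records)
print(issues)
```

Running checks like this as data arrives, rather than during a claim investigation, is what turns a silent hole in the timeline into something that can be fixed while the source data still exists.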

Emerson’s Data Integration team helped by designing:

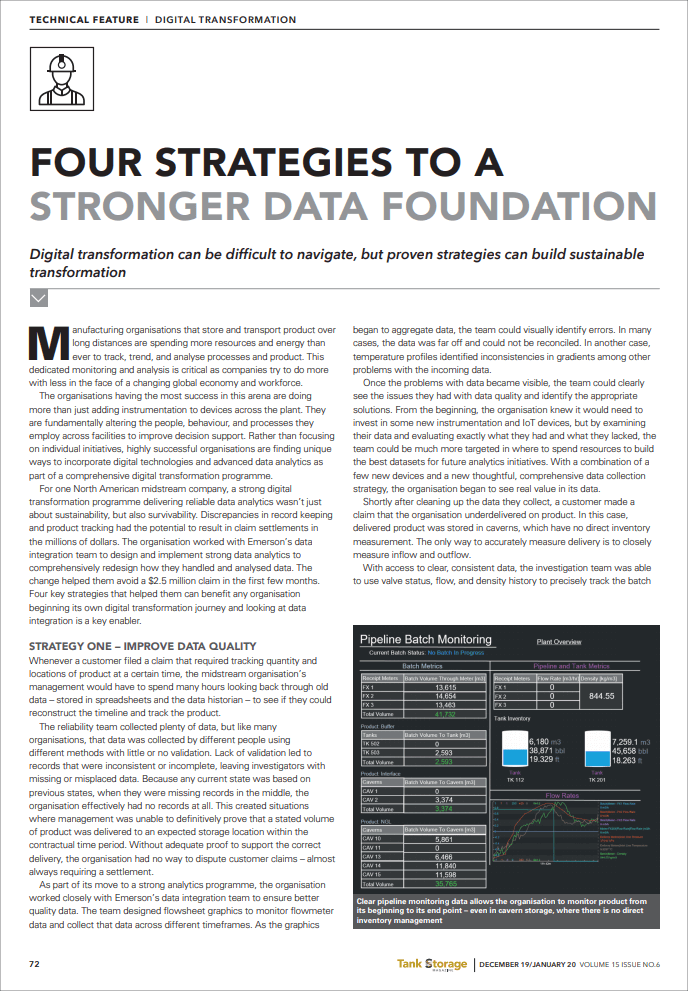

…flowsheet graphics to monitor flowmeter data and collect that data across different timeframes. As the graphics began to aggregate data, the team could visually identify errors. In many cases, the data was far off and could not be reconciled. In other cases, temperature profiles revealed inconsistent gradients, among other problems with the incoming data.
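The team identified these errors visually through the flowsheet graphics. A simple programmatic counterpart, sketched here under stated assumptions, is a per-window balance check that compares metered volume into a tank with the change in tank inventory over the same window. The window labels, the 0.5% tolerance and the function name `flag_imbalances` are hypothetical choices for illustration only.

```python
# Hypothetical hourly windows: (label, metered volume in, change in tank inventory).
TOLERANCE = 0.005  # ASSUMED allowable imbalance of 0.5%


def flag_imbalances(windows, tolerance=TOLERANCE):
    """Return labels of windows where metered volume and inventory change
    disagree by more than the relative tolerance."""
    flagged = []
    for label, metered, inventory_change in windows:
        reference = max(abs(metered), abs(inventory_change))
        if reference == 0:
            continue  # idle window: nothing to reconcile
        if abs(metered - inventory_change) / reference > tolerance:
            flagged.append(label)
    return flagged


windows = [
    ("08:00", 1000.0, 998.0),  # 0.2% apart: within tolerance
    ("09:00", 1000.0, 940.0),  # 6% apart: cannot be reconciled
    ("10:00", 0.0, 0.0),       # idle hour
]
flagged = flag_imbalances(windows)
print(flagged)
```

A check like this only says *where* the numbers disagree; deciding whether the meter, the level gauge or the record keeping is at fault still takes the kind of investigation the graphics supported.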

Once the problems were made visible, the data collection processes could be fixed, and the data cleansed.

With access to clear, consistent data, the investigation team was able to use valve status, flow, and density history to precisely track the batch from its beginning to its end point. Management was able to show the customer exactly when, where, and how much product flowed into their facility and defend against a nearly $2.5 million claim.
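As a rough illustration of how valve status, flow and density history can be combined, the sketch below sums the volume that passed an open valve into a tank while the measured density stayed within a window expected for the batch. Every tag name, unit, the density window and the function `trace_batch` are hypothetical assumptions; using density to tell one product from its neighbours is a common pipeline practice, but this is not the method used in the case study.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Sample:
    ts: datetime
    valve_open: bool   # hypothetical valve status tag on the receiving tank
    flow_bbl_h: float  # hypothetical flow rate tag, barrels per hour
    density: float     # hypothetical density tag, kg/m^3


def trace_batch(samples, density_range):
    """Return (start, end, volume) for flow through an open valve while density
    matched the batch. Each sample's flow is assumed to hold until the next sample."""
    lo, hi = density_range
    ordered = sorted(samples, key=lambda s: s.ts)
    volume = 0.0
    start = end = None
    for curr, nxt in zip(ordered, ordered[1:]):
        if curr.valve_open and lo <= curr.density <= hi:
            hours = (nxt.ts - curr.ts).total_seconds() / 3600
            volume += curr.flow_bbl_h * hours
            if start is None:
                start = curr.ts
            end = nxt.ts
    return start, end, volume


t0 = datetime(2023, 3, 1, 8)
samples = [
    Sample(t0, False, 0.0, 0.0),
    Sample(t0 + timedelta(hours=1), True, 500.0, 850.0),
    Sample(t0 + timedelta(hours=2), True, 500.0, 852.0),
    Sample(t0 + timedelta(hours=3), True, 400.0, 790.0),  # density shift: next product
    Sample(t0 + timedelta(hours=4), False, 0.0, 0.0),
]
start, end, volume = trace_batch(samples, (840.0, 860.0))
print(start, end, volume)
```

The result answers the three questions a claim turns on: when delivery started and ended, and how much product arrived. Its accuracy depends entirely on the history having no gaps, which is why data quality comes first.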

Read the article for Mark’s and Dapo’s description of the other three key strategies that led to these business performance improvements.

Visit the Operational Certainty Consulting – Data Management section on Emerson.com to learn how to drive data management performance improvements by enabling data capture, optimization, and visualization, and by meeting reporting needs. You can also connect and interact with other data management experts in the IIoT & Digital Transformation group in the Emerson Exchange 365 community.